Depth Perception

A 2D camera flattens the world: you can see what is in the scene, but not how far away it is. Depth perception solves this. There are three main approaches: stereo vision, structured light, and time-of-flight (used by ToF cameras and LiDAR). Let's look at how each works and when to use it.

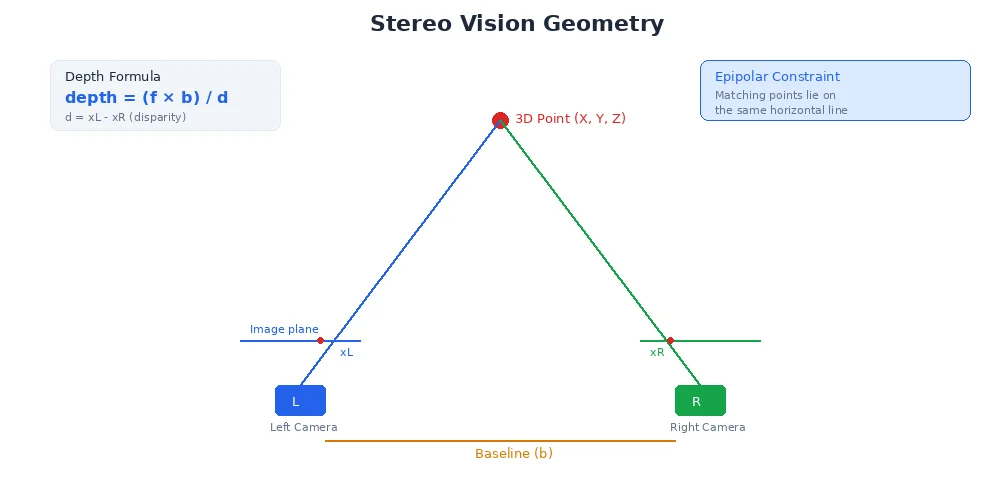

Stereo Vision: Two Cameras, One Brain

Humans have two eyes spaced apart. Your brain compares the two images and figures out depth through triangulation. Stereo cameras do the same.

How It Works

- Capture two images from cameras separated by a known distance (the baseline)

- Find matching points: the pixels in the two images that show the same point in the scene

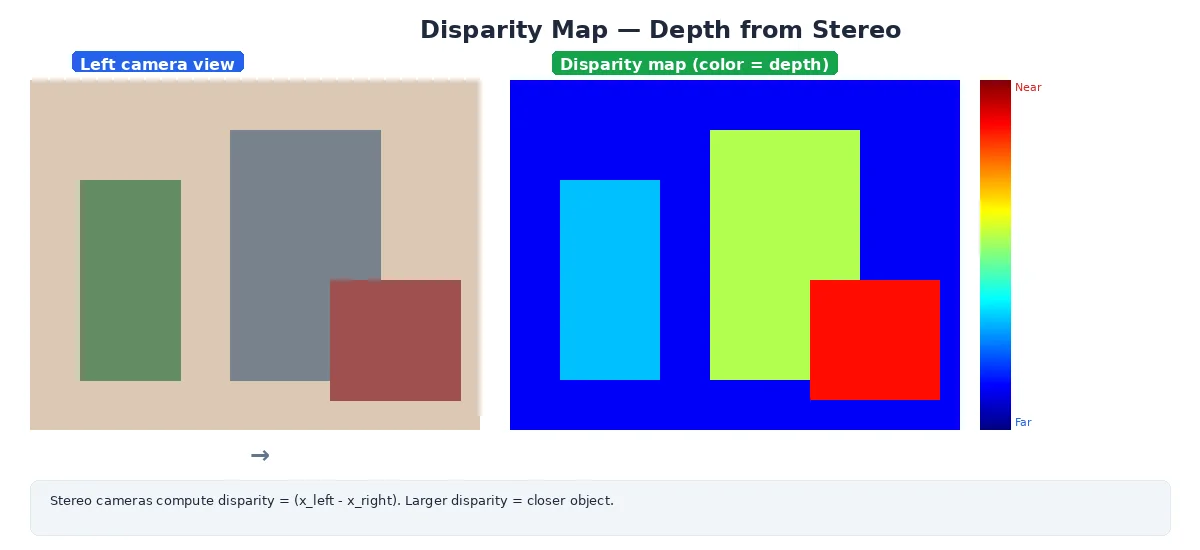

- Measure disparity — how much the pixel shifted between left and right images

- Calculate depth using geometry:

`depth = (focal_length * baseline) / disparity`

The bigger the disparity (the larger the shift), the closer the object.
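As a quick sanity check on the formula, here is a minimal sketch with made-up numbers. The 700-pixel focal length and 12 cm baseline are assumptions chosen for illustration, not values from a specific camera, and `depth_from_disparity` is a hypothetical helper name.

```python
def depth_from_disparity(focal_length_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in meters for a rectified stereo pair (focal length and disparity in pixels)."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


# Hypothetical camera: 700 px focal length, 12 cm baseline
for disparity in (84.0, 42.0, 8.4):
    depth_m = depth_from_disparity(700.0, 0.12, disparity)
    print(f"{disparity:5.1f} px -> {depth_m:.1f} m")
```

Halving the disparity doubles the depth, which is also why a one-pixel matching error matters much more for distant objects than for nearby ones.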

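    import numpy as np

    INVALID = 0.0  # marker for pixels with no valid depth

    def compute_depth(left_image, right_image, focal_length, baseline, find_match):
        """Simplified stereo matching on a rectified image pair.

        find_match(pixel, row, x) is supplied by the caller and returns the
        disparity in pixels for column x, or 0 if no match is found.
        """
        height, width = left_image.shape[:2]
        depth = np.full((height, width), INVALID)
        # For each pixel in the left image, find the matching pixel in the right
        for y in range(height):
            for x in range(width):
                # Search along the same horizontal line (epipolar constraint)
                disparity = find_match(left_image[y, x], right_image[y, :], x)
                # Compute depth
                if disparity > 0:
                    depth[y, x] = (focal_length * baseline) / disparity
                else:
                    depth[y, x] = INVALID  # No match found
        return depth

    # Example call (the capture functions and find_match depend on your setup):
    # depth = compute_depth(capture_left_camera(), capture_right_camera(),
    #                       focal_length, baseline, find_match)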
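The `find_match` step above is left undefined. One common way to fill it in is block matching with a sum of absolute differences (SAD) cost: compare a small window around the left pixel against windows at candidate shifts in the right image. The sketch below assumes rectified grayscale rows; the function name, window size, and search range are illustrative choices, not part of the original code.

```python
import numpy as np


def sad_disparity(left_row, right_row, x, window=2, max_disparity=16):
    """Disparity at column x of one rectified row, found by minimizing SAD cost."""
    if x - window < 0 or x + window >= len(left_row):
        return 0  # window does not fit in the row; treat as no match
    patch = left_row[x - window : x + window + 1].astype(np.int64)
    best_d, best_cost = 0, None
    for d in range(0, max_disparity + 1):
        xr = x - d  # a scene point appears further left in the right image
        if xr - window < 0:
            break
        candidate = right_row[xr - window : xr + window + 1].astype(np.int64)
        cost = np.abs(patch - candidate).sum()
        if best_cost is None or cost < best_cost:
            best_d, best_cost = d, cost
    return best_d


# Synthetic check: the left row is the right row shifted 5 pixels to the right
rng = np.random.default_rng(0)
right_row = rng.integers(0, 256, size=64)
left_row = np.roll(right_row, 5)
print(sad_disparity(left_row, right_row, x=30))
```

Comparing windows instead of single pixels makes the match less ambiguous, but it still has nothing to latch onto when the window covers a uniform surface.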

Challenges

- Textureless surfaces: A blank white wall has no unique features to match

- Occlusions: Objects visible in one camera but not the other

- Computational cost: Matching millions of pixels in real-time is expensive

- Calibration: Cameras must be precisely aligned and calibrated together

Stereo works best outdoors or in textured environments. It struggles in featureless areas (long hallways, empty rooms) where there's nothing unique to match between images.

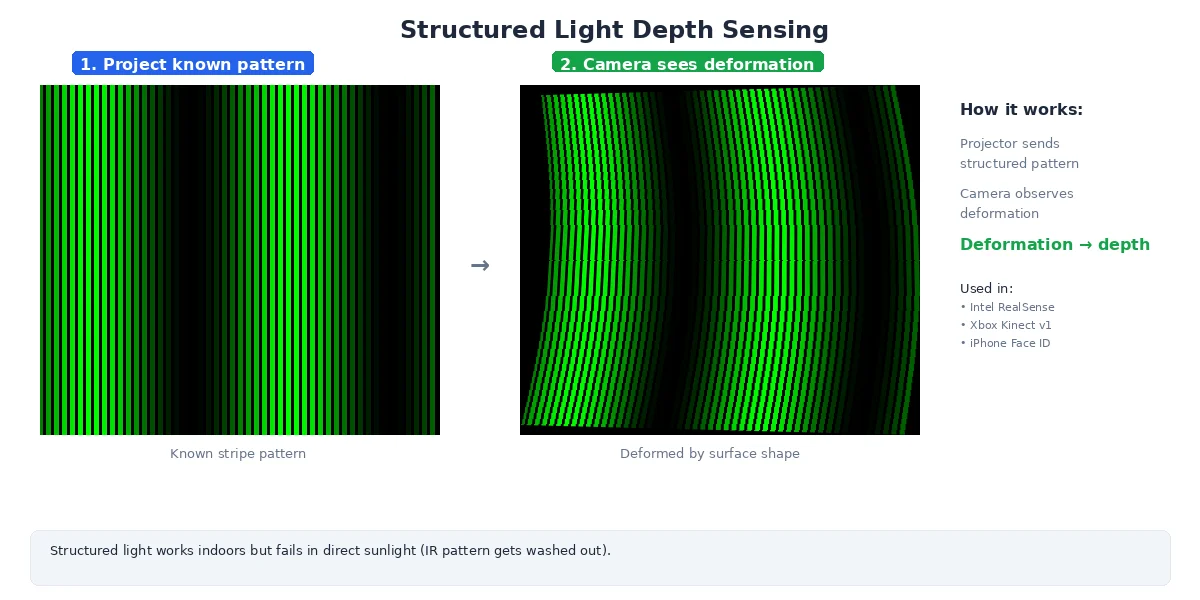

Structured Light: Project a Pattern

Instead of relying on natural scene texture, structured light sensors project a known pattern (dots, lines, or grids) onto the scene. The camera sees how the pattern deforms, revealing depth.

How It Works

- Project an infrared pattern (invisible to humans)

- Capture it with an IR camera

- Compare observed pattern to the known pattern

- Calculate depth from pattern distortion
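One way to picture the last two steps is to treat the projector like a second camera. The sketch below assumes an idealized projector and camera that are rectified like a stereo pair, so each dot's depth follows from how far it has shifted sideways from its known position. The focal length, baseline, dot positions, and sign convention are all made-up assumptions, and real sensors must also work out which observed dot corresponds to which projected dot, which this sketch skips.

```python
import numpy as np

FOCAL_LENGTH_PX = 580.0  # assumed IR camera focal length, in pixels
BASELINE_M = 0.075       # assumed projector-to-camera distance, in meters


def depth_from_pattern_shift(projected_cols, observed_cols):
    """Triangulate one depth per dot from the shift between known and observed columns."""
    projected = np.asarray(projected_cols, dtype=float)
    observed = np.asarray(observed_cols, dtype=float)
    shift = observed - projected  # assumed positive for dots at a finite distance
    depth = np.full(shift.shape, np.nan)  # NaN marks dots with no usable shift
    valid = shift > 0
    depth[valid] = FOCAL_LENGTH_PX * BASELINE_M / shift[valid]
    return depth


projected = [100.0, 200.0, 300.0]  # where each dot sits in the known pattern
observed = [143.5, 229.0, 300.0]   # where the IR camera actually sees it
print(depth_from_pattern_shift(projected, observed))
```

The geometry is the same triangulation used in stereo; the difference is that one side of the pair is a known pattern rather than a second image.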

Popular sensors: Kinect (original), iPhone Face ID, and Intel RealSense SR300-series cameras (the RealSense D400 series uses active stereo instead).

Advantages

- Works on textureless surfaces — the projected pattern provides the texture

- Dense depth maps — every pixel gets a depth value

- Fast computation — pattern matching is simpler than stereo correspondence

Disadvantages

- Limited range — typically 0.5m to 5m (pattern gets too faint at distance)

- Fails in sunlight — bright IR light overwhelms the projected pattern

- Interference — multiple structured-light sensors in the same room interfere with each other

Don't use structured light sensors outdoors in daylight. The sun emits strong infrared light that washes out the projected pattern, causing depth readings to fail.

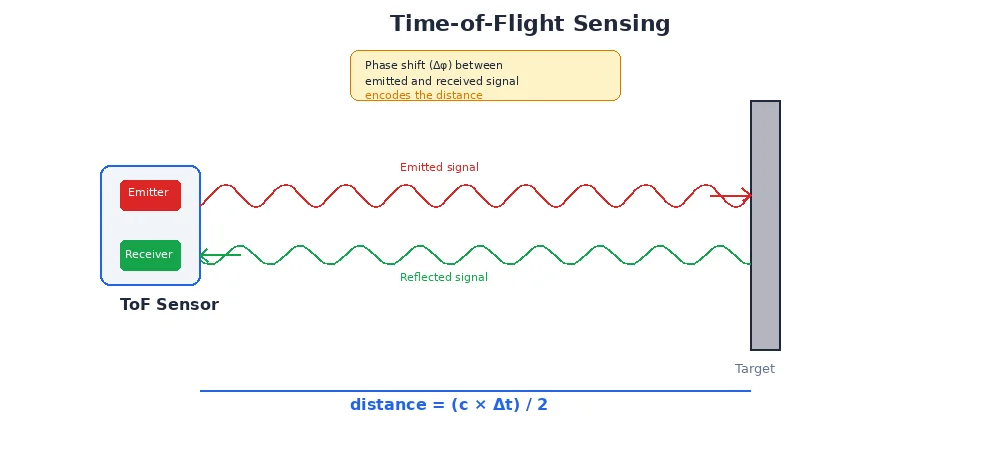

Time-of-Flight (ToF): Measure Light's Travel Time

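Some depth cameras (and most LiDAR units) use time-of-flight: emit light, measure how long it takes to return, and convert that round-trip time to distance using the speed of light (distance = speed of light × time / 2).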

How It Works (Depth Camera Version)

- Emit modulated infrared light from an array of emitters

- Capture the reflection with a sensor array

- Measure phase shift to determine distance (continuous-wave ToF)

- Output a depth image — one depth per pixel

This is essentially a "flash LiDAR" — instead of scanning a single beam, the whole scene is illuminated at once.
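The phase-shift step can be sketched for a single pixel as follows. This assumes the common four-sample scheme, where the sensor correlates the returned light against the emitted signal at four offsets 90 degrees apart; the sample model, the sign convention, and the 20 MHz modulation frequency are assumptions for illustration, and real sensors differ in these details.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def phase_from_samples(c0, c1, c2, c3):
    """Recover the phase shift from four correlation samples taken 90 degrees apart."""
    return math.atan2(c3 - c1, c0 - c2) % (2 * math.pi)


def distance_from_phase(phase_rad, modulation_hz):
    """Distance in meters; the light travels out and back, hence 4*pi rather than 2*pi."""
    return SPEED_OF_LIGHT * phase_rad / (4 * math.pi * modulation_hz)


def unambiguous_range(modulation_hz):
    """Beyond this distance the phase wraps around and readings alias."""
    return SPEED_OF_LIGHT / (2 * modulation_hz)


# Simulate one pixel: true phase of 90 degrees, 20 MHz modulation (assumed values)
true_phase, amplitude, offset = math.pi / 2, 1.0, 0.5
samples = [amplitude * math.cos(true_phase + k * math.pi / 2) + offset for k in range(4)]
phase = phase_from_samples(*samples)
print(f"distance: {distance_from_phase(phase, 20e6):.3f} m")
print(f"max unambiguous range: {unambiguous_range(20e6):.3f} m")
```

Because phase repeats every full cycle, a lower modulation frequency extends the unambiguous range at the cost of depth precision, which is one reason these cameras are limited to short distances.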

Advantages

- Works in darkness and under most indoor lighting (active sensor, like LiDAR), though strong sunlight can add noise and reduce range

- Fast — entire frame measured simultaneously

- No correspondence problem — each pixel measures its own depth

Disadvantages

- Lower resolution — typically 640×480 or less

- Multipath errors — reflections off shiny surfaces confuse the sensor

- Limited range — usually < 10m

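The output can be represented like this, then unprojected into a point cloud using the camera intrinsics (the `fx`, `fy`, `cx`, `cy` values below are placeholders; use your camera's calibration):

    import numpy as np

    # A depth image looks like a grayscale image, but pixel values are distances
    depth_image = {
        "width": 640,
        "height": 480,
        "encoding": "16UC1",  # 16-bit unsigned int, 1 channel
        "data": [
            1500,  # pixel (0,0): 1500mm = 1.5m away
            1520,  # pixel (0,1): 1.52m away
            0,     # pixel (0,2): invalid / no reading
            # ... 640 * 480 = 307,200 depth values
        ],
    }

    # Convert depth image to 3D point cloud:
    width, height = depth_image["width"], depth_image["height"]
    # The sample data above is truncated, so pad it with zeros (invalid) to a full frame
    data = np.zeros(width * height, dtype=np.uint16)
    data[: len(depth_image["data"])] = depth_image["data"]
    depth = data.reshape(height, width)  # row-major, so index as depth[y, x]
    fx, fy = 525.0, 525.0           # focal lengths in pixels (placeholder values)
    cx, cy = width / 2, height / 2  # principal point (placeholder values)

    points = []
    for y in range(height):
        for x in range(width):
            z = depth[y, x] / 1000.0  # mm to meters
            if z > 0:  # valid depth
                # Use camera intrinsics to unproject
                X = (x - cx) * z / fx
                Y = (y - cy) * z / fy
                Z = z
                points.append((X, Y, Z))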
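The per-pixel loop above is easy to follow but slow in pure Python for a full frame. A vectorized NumPy version of the same unprojection might look like the sketch below; the function name and the intrinsics passed in the example are placeholders, not values from a real camera.

```python
import numpy as np


def depth_to_points(depth_mm, fx, fy, cx, cy):
    """Unproject a (height, width) depth image in millimeters to an (N, 3) array in meters."""
    height, width = depth_mm.shape
    # xs[y, x] == x and ys[y, x] == y for every pixel
    xs, ys = np.meshgrid(np.arange(width), np.arange(height))
    z = depth_mm.astype(np.float64) / 1000.0  # mm to meters
    valid = z > 0  # zero means no reading
    X = (xs[valid] - cx) * z[valid] / fx
    Y = (ys[valid] - cy) * z[valid] / fy
    return np.column_stack((X, Y, z[valid]))


# Tiny 2x3 depth image with one invalid pixel; intrinsics are placeholder values
depth_mm = np.array([[1500, 1520, 0], [1480, 1500, 1510]], dtype=np.uint16)
points = depth_to_points(depth_mm, fx=525.0, fy=525.0, cx=1.0, cy=0.5)
print(points.shape)
```

Invalid pixels are dropped, so the number of points can be smaller than the number of pixels.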

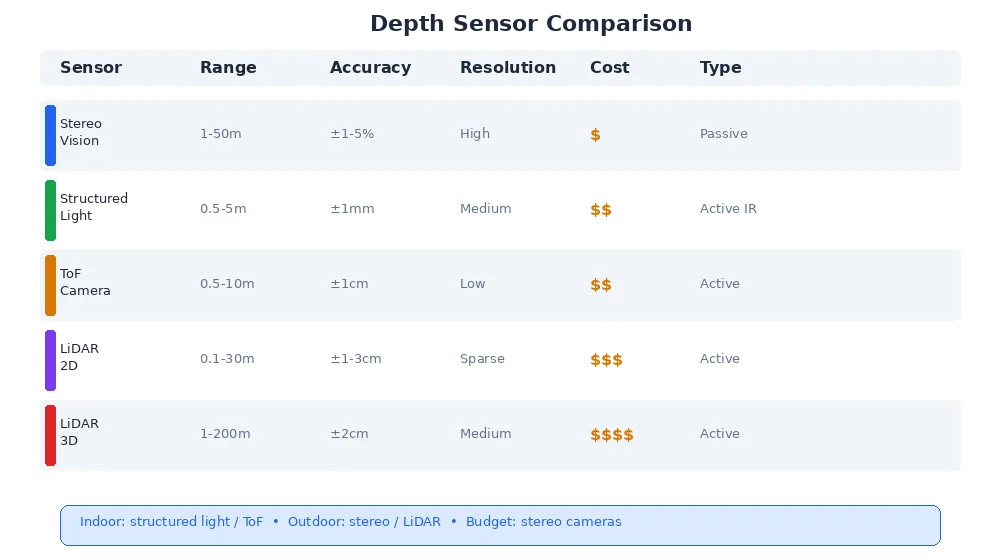

Comparing Depth Sensors

| Method | Range | Accuracy | Works on textureless surfaces? | Works in sunlight? | Resolution | Cost |
|---|---|---|---|---|---|---|
| Stereo | 1-50 m | ±1-5% | ❌ | ✅ | High | $ |
| Structured Light | 0.5-5 m | ±1 mm | ✅ | ❌ | Medium | $$ |
| ToF Camera | 0.5-10 m | ±1 cm | ✅ | ⚠️ | Low | $$ |
| LiDAR (2D) | 0.1-30 m | ±1-3 cm | ✅ | ✅ | Sparse | $$$ |
| LiDAR (3D) | 1-200 m | ±2 cm | ✅ | ✅ | Medium | $$$$ |

Rules of thumb:

- Indoor robots: structured light or ToF cameras work well (short range, dense depth, low cost).
- Outdoor robots: stereo or LiDAR (sunlight-resistant, longer range).
- Warehouse robots navigating aisles: 2D LiDAR is a good fit (fast, inexpensive compared with 3D LiDAR, and sufficient for obstacle detection).
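The comparison table can also be encoded as a small lookup that filters sensors by requirement. This is only a sketch of that idea: the `Sensor` class and `candidate_sensors` function are hypothetical names, the ranges are copied from the table, and the table's partial sunlight rating for ToF cameras is conservatively treated as "not sunlight-safe".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sensor:
    name: str
    min_range_m: float
    max_range_m: float
    handles_textureless: bool
    sunlight_ok: bool


# Values copied from the comparison table (ToF sunlight rating treated as False)
SENSORS = [
    Sensor("Stereo", 1.0, 50.0, False, True),
    Sensor("Structured Light", 0.5, 5.0, True, False),
    Sensor("ToF Camera", 0.5, 10.0, True, False),
    Sensor("LiDAR (2D)", 0.1, 30.0, True, True),
    Sensor("LiDAR (3D)", 1.0, 200.0, True, True),
]


def candidate_sensors(required_range_m, outdoors, textureless_scene):
    """Return the names of sensors whose table entries satisfy the requirements."""
    return [
        s.name
        for s in SENSORS
        if s.min_range_m <= required_range_m <= s.max_range_m
        and (s.sunlight_ok or not outdoors)
        and (s.handles_textureless or not textureless_scene)
    ]


print(candidate_sensors(required_range_m=3.0, outdoors=False, textureless_scene=True))
print(candidate_sensors(required_range_m=40.0, outdoors=True, textureless_scene=False))
```

A filter like this only narrows the options; cost, resolution, and accuracy still have to be weighed for the specific robot.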

What's Next?

Now you can measure 3D geometry — but how do you recognize what you're looking at? The next lesson introduces object detection — finding and classifying objects in images using machine learning.