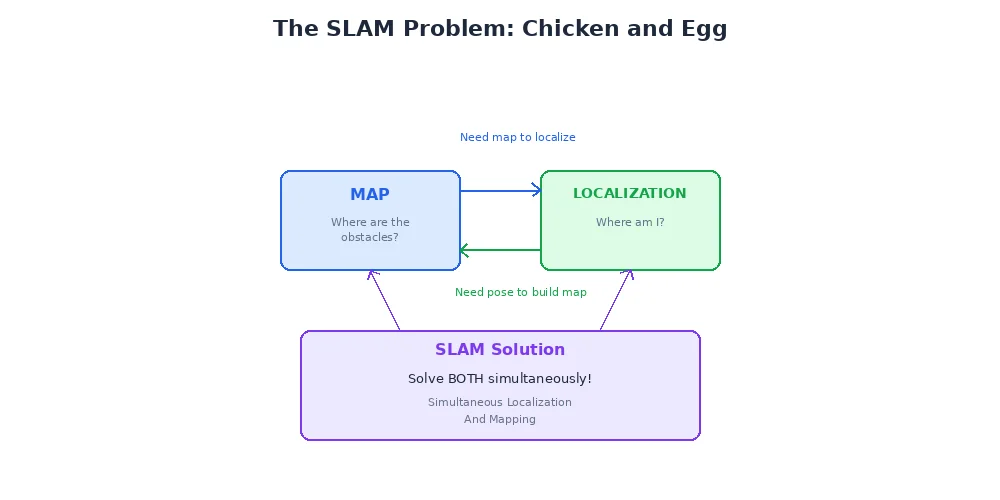

The Chicken-and-Egg Problem

Here's a riddle: to build a map, you need to know where you are. To know where you are, you need a map. So if you have neither... where do you start?

That's the SLAM problem: Simultaneous Localization And Mapping. The robot must build a map of an unknown environment while simultaneously figuring out its position on that incomplete map. It's like drawing a floor plan of a dark building using only your footsteps to judge distance and a flashlight to sense nearby walls.

SLAM is considered one of the fundamental problems in mobile robotics, and solving it robustly is what separates toy robots from serious autonomous systems.

Why SLAM is Hard

Let's break down the difficulty:

Circular Dependency

You need your position to add sensor data to the map in the right place. You need the map to figure out your position. Each new estimate is built on the earlier ones, so errors compound.
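To make the dependency concrete, here is a minimal sketch of how a single range reading gets placed on the map. The function name `sensor_to_map` and the numbers are illustrative assumptions, not part of any particular SLAM library.

```python
import math


def sensor_to_map(pose, distance, bearing):
    """Convert a range/bearing reading into map coordinates.

    pose is (x, y, heading) in the map frame; heading and bearing are in radians.
    """
    x, y, heading = pose
    angle = heading + bearing
    return (x + distance * math.cos(angle), y + distance * math.sin(angle))


# The robot sees a wall point 10 m straight ahead.
true_pose = (2.0, 1.0, 0.0)
drifted_pose = (2.0, 1.0, math.radians(5.0))  # same spot, heading off by 5 degrees

true_point = sensor_to_map(true_pose, 10.0, 0.0)
drifted_point = sensor_to_map(drifted_pose, 10.0, 0.0)
placement_error = math.dist(true_point, drifted_point)
print(f"wall point misplaced by {placement_error:.2f} m")
```

A heading error of only 5 degrees puts the wall point almost a meter away from where it belongs, and any later position estimate that relies on that point inherits the mistake.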

Sensor Drift

Odometry (wheel encoders, IMU) accumulates error over time. Walk 100 meters counting steps, and you might be off by several meters. The map you're building is based on these drifting position estimates.
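The sketch below shows how small, constant odometry errors add up over a 100-meter walk. The error values (a 2% distance overestimate and 0.05 degrees of heading drift per step) are made-up assumptions chosen for illustration, and `dead_reckon` is a hypothetical helper.

```python
import math


def dead_reckon(steps):
    """Integrate (distance, turn) odometry steps starting from the origin.

    Returns the final (x, y, heading), with heading in radians.
    """
    x = y = heading = 0.0
    for distance, turn in steps:
        heading += turn
        x += distance * math.cos(heading)
        y += distance * math.sin(heading)
    return x, y, heading


# Ground truth: 100 one-meter steps in a straight line.
true_steps = [(1.0, 0.0)] * 100
# Assumed sensor errors: 2% too long per step, 0.05 degrees of heading drift per step.
measured_steps = [(1.02, math.radians(0.05))] * 100

true_x, true_y, _ = dead_reckon(true_steps)
est_x, est_y, _ = dead_reckon(measured_steps)
drift = math.hypot(est_x - true_x, est_y - true_y)
print(f"position error after 100 m: {drift:.1f} m")
```

With these assumed errors the estimate ends up several meters from the true position, and most of that comes from the heading drift rather than the distance error.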

Data Association

When the robot sees a corner, is it a new corner to add to the map, or one it saw 30 seconds ago? Getting this wrong creates duplicate landmarks or destroys your map.
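One simple way to make that decision is nearest-neighbor matching with a distance gate, sketched below. The function name `associate` and the 0.5 m gate are assumptions for illustration; practical systems usually gate on a statistical distance that accounts for uncertainty rather than on raw meters.

```python
import math


def associate(observation, landmarks, gate=0.5):
    """Return the index of the closest landmark within `gate` meters, else None."""
    best_id, best_distance = None, gate
    for idx, landmark in enumerate(landmarks):
        distance = math.dist(observation, landmark)
        if distance < best_distance:
            best_id, best_distance = idx, distance
    return best_id


known_corners = [(1.0, 1.0), (4.0, 2.0)]

print(associate((1.1, 0.9), known_corners))  # close to corner 0: treated as a re-observation
print(associate((8.0, 8.0), known_corners))  # far from everything: treated as a new corner
```

A gate that is too loose merges distinct corners into one, while a gate that is too tight creates duplicates of the same corner.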

Scale

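A building might have thousands of landmarks. A naive estimator that tracks the correlation between every pair of landmarks needs storage that grows quadratically with the landmark count, so large maps call for sparse data structures.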
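As a rough back-of-the-envelope check, the sketch below counts the entries in a dense covariance matrix for a 2D filter-style estimator. It assumes a state of 3 numbers for the robot pose plus 2 per landmark; the function name is hypothetical.

```python
def dense_covariance_entries(num_landmarks, pose_dim=3, landmark_dim=2):
    """Entries in a dense covariance matrix over the robot pose and all landmarks."""
    state_dim = pose_dim + landmark_dim * num_landmarks
    return state_dim * state_dim


for n in (10, 100, 1000):
    print(f"{n} landmarks -> {dense_covariance_entries(n)} covariance entries")
```

Going from 100 to 1,000 landmarks multiplies the matrix size by roughly 100, which is why dense bookkeeping stops being practical for building-scale maps.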

The breakthrough that made SLAM practical was realizing you don't need to maintain a perfect map constantly — you just need to track uncertainty and occasionally correct accumulated errors through "loop closure" (which we'll cover in the next lesson).

Two Main Approaches

SLAM algorithms fall into two broad categories:

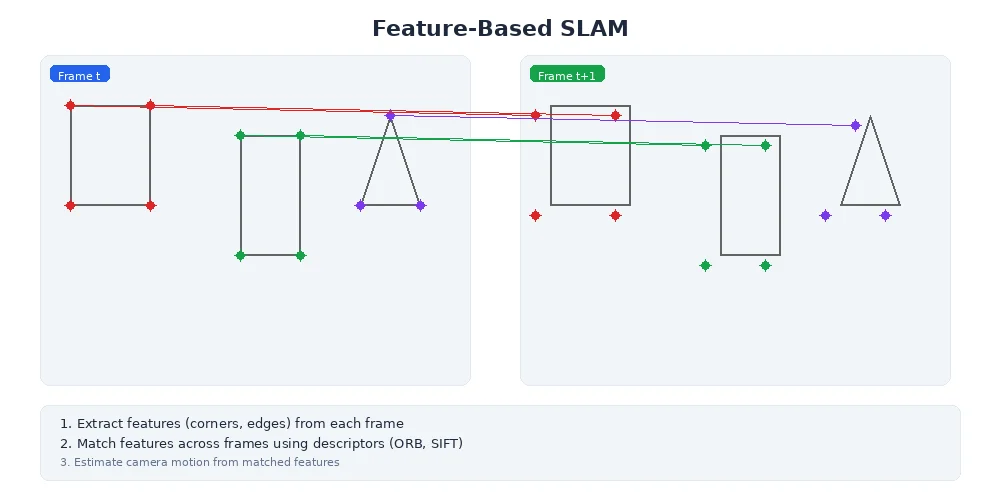

1. Feature-Based SLAM

The robot extracts distinctive landmarks (corners, edges, specific objects) from sensor data and tracks them as it moves. The map is a list of landmark positions.

How it works:

- Detect features in sensor data (camera: corners, edges; LiDAR: lines, planes)

- Match features across consecutive observations to track them

- Use feature positions to estimate robot motion

- Refine both robot trajectory and feature positions together

Pros:

- Compact maps (just landmark coordinates)

- Fast for sparse environments

- Good for visual SLAM with cameras

Cons:

- Requires distinctive features (fails in featureless hallways)

- Matching features across views is hard

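```python
from dataclasses import dataclass


@dataclass
class Pose:
    x: float
    y: float
    theta: float  # heading in radians


class FeatureBasedSLAM:
    # detect_features, match_to_landmarks, correct_pose_estimate and
    # refine_landmark_positions stand in for real implementations
    # and are not defined here.

    def __init__(self):
        self.landmarks = []  # List of (x, y) positions
        self.robot_pose = Pose(0.0, 0.0, 0.0)

    def process_scan(self, sensor_data):
        # Extract features from sensor data
        observed_features = detect_features(sensor_data)

        # Match to known landmarks (or create new ones)
        for feature in observed_features:
            landmark_id = match_to_landmarks(feature, self.landmarks)
            if landmark_id is None:
                # New landmark
                self.landmarks.append(feature.position)
            else:
                # Update robot pose using known landmark
                self.robot_pose = correct_pose_estimate(self.robot_pose, landmark_id, feature)

        # Update landmark positions based on refined pose
        self.landmarks = refine_landmark_positions(self.landmarks, self.robot_pose)
```

2. Graph-Based SLAM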

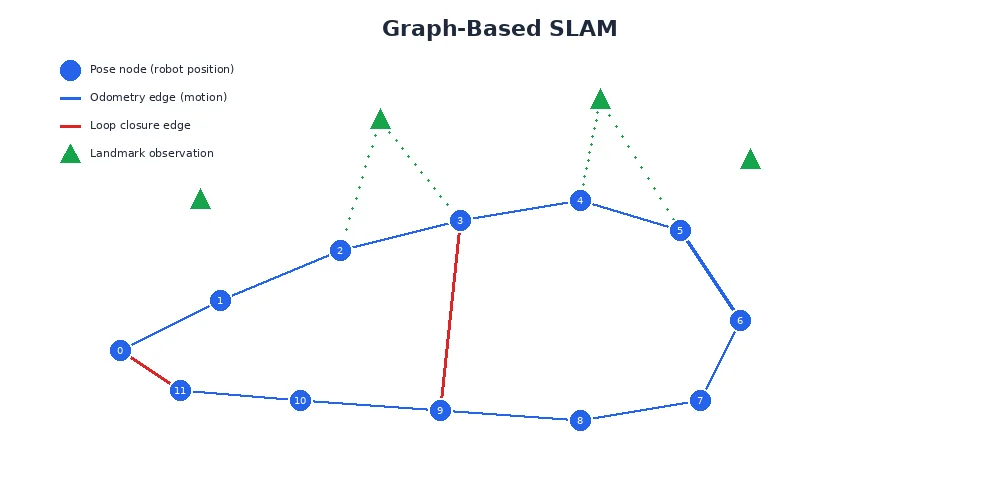

Instead of maintaining a single map, the algorithm builds a graph where nodes are robot poses at different times, and edges are spatial constraints (odometry measurements, landmark observations).

The magic happens during graph optimization: periodically, the algorithm adjusts all poses to make the constraints as consistent as possible. This is like relaxing a network of springs, where each constraint pulls on the poses it connects until the whole system settles into the arrangement with the least tension.

How it works:

- Add a node for each robot pose as it moves

- Add edges between consecutive poses (from odometry)

- When recognizing a previous location, add a "loop closure" edge

- Periodically optimize the graph to minimize constraint violations

- Build the map from the optimized trajectory
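The steps above can be sketched in one dimension. In this toy example the robot drives out along a corridor and back to its starting point, and its odometry overestimates forward motion. The function `optimize_pose_graph`, the plain gradient-descent solver, and all the numbers are assumptions chosen to keep the sketch short; real back ends typically use Gauss-Newton or Levenberg-Marquardt on sparse matrices.

```python
def optimize_pose_graph(num_poses, edges, iterations=2000, step=0.1):
    """Least-squares fit of 1D poses to relative constraints.

    edges holds (i, j, offset) tuples meaning "pose j should sit `offset`
    meters past pose i". Pose 0 is pinned at 0 to fix the reference frame.
    """
    poses = [0.0] * num_poses
    for _ in range(iterations):
        gradient = [0.0] * num_poses
        for i, j, offset in edges:
            residual = (poses[j] - poses[i]) - offset
            gradient[j] += 2.0 * residual
            gradient[i] -= 2.0 * residual
        gradient[0] = 0.0  # keep the first pose anchored
        poses = [p - step * g for p, g in zip(poses, gradient)]
    return poses


# Odometry edges between consecutive poses: out two steps, back two steps.
odometry = [(0, 1, 1.1), (1, 2, 1.1), (2, 3, -0.9), (3, 4, -0.9)]
# Loop closure edge: the robot recognizes that pose 4 is the same place as pose 0.
loop_closure = [(4, 0, 0.0)]

before = optimize_pose_graph(5, odometry)
after = optimize_pose_graph(5, odometry + loop_closure)
print("final pose, odometry only:    ", round(before[4], 3))
print("final pose, with loop closure:", round(after[4], 3))
```

With odometry alone the robot believes it ended 0.4 m from the start. Adding the loop closure edge spreads that 0.4 m disagreement evenly over all five edges, which pulls the final pose to within 0.08 m of the start and shifts the intermediate poses as well.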

Pros:

- Handles large-scale environments

- Naturally incorporates loop closure

- Can produce occupancy grids or feature maps

Cons:

- Periodic optimization can be slow

- Requires good loop closure detection

Modern SLAM systems often combine both approaches — use features for tracking frame-to-frame, but maintain a pose graph for global optimization.

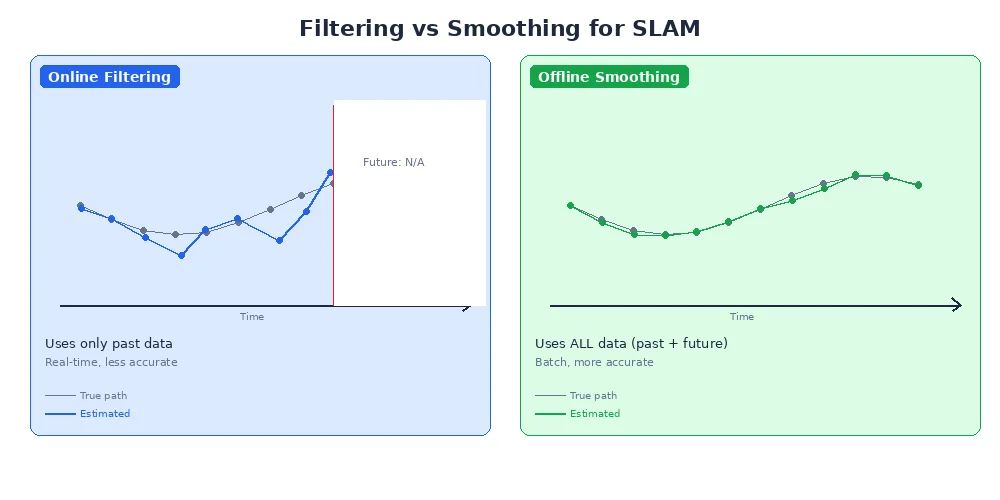

The Role of Filtering vs. Smoothing

There are two philosophies for handling SLAM uncertainty:

Filtering (EKF-SLAM, FastSLAM)

Maintain a probability distribution over the current robot pose and map. Update this distribution incrementally with each new measurement. Past poses are folded into the current estimate and then discarded, so once an error has been absorbed it can't be undone later.

- Fast per-update

- Can't revise history

- Errors accumulate
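The filtering idea in its simplest form is a one-dimensional Kalman filter, sketched below. It tracks only the robot's position along a line, whereas EKF-SLAM also carries the landmarks in its state. The function names and noise values are assumptions for illustration.

```python
def predict(mean, variance, motion, motion_variance):
    """Motion step: shift the belief and grow its uncertainty."""
    return mean + motion, variance + motion_variance


def update(mean, variance, measurement, measurement_variance):
    """Measurement step: blend the belief with a direct position reading."""
    gain = variance / (variance + measurement_variance)
    new_mean = mean + gain * (measurement - mean)
    new_variance = (1.0 - gain) * variance
    return new_mean, new_variance


belief = (0.0, 1.0)  # (mean, variance) of the robot's position
belief = predict(*belief, motion=1.0, motion_variance=1.0)
belief = update(*belief, measurement=1.6, measurement_variance=2.0)
print(belief)
```

Notice that only the latest `belief` survives each step. That is what makes the update cheap, and it is also why the filter has nothing to go back and fix when later evidence contradicts an earlier estimate.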

Smoothing (Graph-Based SLAM)

Maintain a history of poses and constraints. Periodically re-optimize the entire trajectory, revising past poses if new evidence (like loop closure) suggests they were wrong.

- Slower periodic optimization

- Can correct past errors

- Better final map quality

Most modern SLAM systems use smoothing because loop closure (next lesson) is critical for long-term accuracy, and smoothing naturally incorporates it.

Visual SLAM vs. LiDAR SLAM

SLAM can use different sensors:

| Sensor Type | Pros | Cons | Common Use |
|---|---|---|---|
| Camera | Rich features, cheap, passive | Struggles in darkness, affected by lighting | Drones, AR/VR headsets |
| LiDAR | Works in any lighting, accurate depth | Expensive, can't read text/color | Self-driving cars, indoor robots |
| Both (Fusion) | Best of both worlds | Complexity, sensor synchronization | High-end autonomous systems |

Visual SLAM (VSLAM) uses cameras and often relies on feature matching, as in ORB-SLAM and PTAM. LiDAR SLAM often relies on scan matching, for example the ICP (Iterative Closest Point) algorithm or the scan matcher in Google's Cartographer.
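To give a feel for scan matching, here is a sketch of the alignment step at the heart of point-to-point ICP in 2D. It assumes the correspondences are already known (point i in one scan matches point i in the other); full ICP alternates between guessing correspondences by nearest neighbor and running a step like this one. The function names and test points are hypothetical.

```python
import math


def align_scans(source, target):
    """Least-squares rigid transform (theta, tx, ty) mapping source onto target.

    Assumes source[i] and target[i] are the same physical point.
    """
    n = len(source)
    src_cx = sum(x for x, _ in source) / n
    src_cy = sum(y for _, y in source) / n
    tgt_cx = sum(x for x, _ in target) / n
    tgt_cy = sum(y for _, y in target) / n

    dot = cross = 0.0
    for (sx, sy), (tx_, ty_) in zip(source, target):
        ax, ay = sx - src_cx, sy - src_cy  # centered source point
        bx, by = tx_ - tgt_cx, ty_ - tgt_cy  # centered target point
        dot += ax * bx + ay * by
        cross += ax * by - ay * bx

    theta = math.atan2(cross, dot)
    c, s = math.cos(theta), math.sin(theta)
    # Translation that carries the rotated source centroid onto the target centroid.
    tx = tgt_cx - (c * src_cx - s * src_cy)
    ty = tgt_cy - (s * src_cx + c * src_cy)
    return theta, tx, ty


def transform(points, theta, tx, ty):
    """Rotate points by theta, then translate by (tx, ty)."""
    c, s = math.cos(theta), math.sin(theta)
    return [(c * x - s * y + tx, s * x + c * y + ty) for x, y in points]


first_scan = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (2.0, 1.5)]
second_scan = transform(first_scan, math.radians(30.0), 2.0, -1.0)

theta, tx, ty = align_scans(first_scan, second_scan)
print(round(math.degrees(theta), 3), round(tx, 3), round(ty, 3))
```

The recovered rotation and translation describe how the sensor moved between the two scans, which is the kind of motion estimate a LiDAR SLAM front end feeds into the map.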

What's Next?

SLAM works surprisingly well for short-term mapping, but there's a catch: errors slowly accumulate as the robot explores. The solution is loop closure — recognizing when you've returned to a previously visited place. That's our next lesson, and it's the secret ingredient that makes long-term SLAM possible.